I wanted to know how I could monitor file system changes, especially activity in my home directory, which is what this thread was about. My first idea was to generate a snapshot of the file list with find, then watch for changes, update the snapshot file accordingly, and use grep to search it.

But that's simply stupid. Just using find does what the OP wants, since the program the OP uses only searches file names and folder names. The first run of find may be slower: 31,000+ files took about six seconds, which is not really slow. Subsequent runs take only about 0.1 seconds, even after you add or delete files.
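
For what it's worth, a name-only search with find can be as small as the sketch below. The function name search_names is made up for illustration, and -iname (case-insensitive matching) assumes GNU or BSD find rather than strict POSIX.

```shell
# search_names: hypothetical helper, not from the original thread.
# Lists files and folders under DIR whose names contain TEXT, ignoring case.
search_names() {
    dir=$1
    text=$2
    # -iname matches against the base name only, not the whole path.
    # Errors such as unreadable directories are discarded.
    find "$dir" -iname "*${text}*" 2>/dev/null
}

# Example (not run here): search_names "$HOME" keepnote
```

Wrapping the text in `*` on both sides gives a substring match, which is roughly what a simple file name search tool does.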

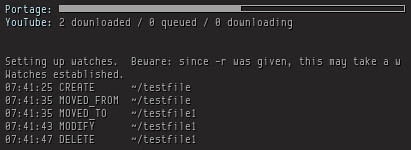

Anyway, it's still fun to know how to monitor files. I installed the inotify-tools package for its inotifywait program.

Here is the script I use:

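inotifywait -m -r --format $'%T %e %w%f' --timefmt '%H:%M:%S' --exclude ~/'(\.mozilla|Documents/KeepNote)' -e modify -e move -e create -e delete ~ 2>&1 | awk '/^[0-9]/ {
  # event lines start with a timestamp; shorten the home directory to ~
  sub(/'"${HOME//\//\\/}"'/, "~", $0)
  split($0, a, " ")
  # width of the "TIME EVENT" prefix
  len=length(a[1])+length(a[2])+1
  # awk strings are 1-indexed, so start substr at 1
  printf "%-20s %s\n", substr($0, 1, len), substr($0, len+2)
  # flush stdout
  system("")
  next
}
# anything else (startup messages, errors) passes through unchanged
{print ; system("")}
' | tee -a /tmp/home_monitor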

-m keeps inotifywait running and reporting events instead of exiting after the first one, and -r watches directories recursively. --format and --timefmt set the output format and timestamp format. I exclude two things from the listing: one is ~/.mozilla and the other is my KeepNote files. You can supply only one --exclude; if you give more than one, only the last one takes effect, so you have to group the patterns into a single regular expression. -e specifies the events you want to monitor.
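
Grouping the patterns by hand gets tedious once there are more than two, so here is a minimal sketch that builds the single regular expression from a list. The helper name join_excludes and the extra `\.cache` pattern are my own inventions for illustration, not part of the script above.

```shell
# join_excludes: hypothetical helper that joins its arguments with "|"
# and wraps them in parentheses, giving one regex for a single --exclude.
join_excludes() {
    old_ifs=$IFS
    IFS='|'
    joined="$*"        # "$*" joins the arguments with the first char of IFS
    IFS=$old_ifs
    printf '(%s)\n' "$joined"
}

# '\.cache' is an assumed extra pattern, added only as an example.
pattern=$(join_excludes '\.mozilla' 'Documents/KeepNote' '\.cache')
printf '%s\n' "$pattern"

# It could then be passed on like this (not run here):
#   inotifywait -m -r --exclude "$HOME/$pattern" -e create "$HOME"
```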

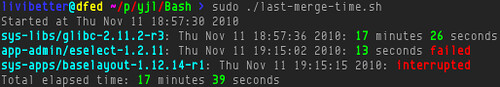

I use awk to adjust the format and align the columns. It also replaces the literal path of your home directory, /home/username, with just ~. Then I pipe the output to tee, so I can watch it on screen and write it to a file at the same time. This way, Conky can show the file with ${tail /tmp/home_monitor 10}.
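
To try the formatting step without waiting for real events, the same idea can be fed a canned line. This is only a sketch: format_events is a made-up name, the sample line and /home/user are invented, and it uses index() instead of the regex substitution in my script so the home path needs no escaping.

```shell
# format_events: hypothetical stand-in for the awk stage of the pipeline.
# Reads "TIME EVENT PATH" lines on stdin; $1 is the home directory to shorten.
format_events() {
    awk -v home="$1" '
    {
        # Replace the first occurrence of the home directory with "~".
        pos = index($0, home)
        if (pos > 0)
            $0 = substr($0, 1, pos - 1) "~" substr($0, pos + length(home))
        # Width of the "TIME EVENT" prefix, including the space between them.
        len = length($1) + length($2) + 1
        # Pad the prefix to 20 columns, then print the path.
        printf "%-20s %s\n", substr($0, 1, len), substr($0, len + 2)
        # Flush stdout so a reader of the pipe sees each line immediately.
        system("")
    }'
}

# Invented sample event, in the format set by --format and --timefmt.
printf '%s\n' '12:34:56 MODIFY /home/user/notes/todo.txt' | format_events /home/user
```

Padding to a fixed width is what keeps the paths lined up when event names differ in length, for example MODIFY next to CREATE,ISDIR.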

I was planning to use a named pipe:

$ MPIPE=/tmp/home_monitor
$ mkfifo $MPIPE
$ inotifywait ... | awk ... >$MPIPE
$ tail -f $MPIPE

It would save some disk space, but it doesn't work with Conky.

If you want to clean up /tmp/home_monitor when it gets too big, just run echo '' > /tmp/home_monitor. An important note: when you tail a file in Conky, make sure it exists. If you remove the file, Conky exits. I was hoping for a Conky variable that would run a program in a thread and do a job similar to $tail, only with the thread's standard output as its input instead of a file.
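
Both points, keeping the file small and making sure it always exists, could be handled by one small function run from cron or before starting Conky. This is a rough sketch: trim_log and the size limit are hypothetical, and it empties the file in place instead of deleting it, so the file never disappears from under Conky.

```shell
# trim_log: hypothetical helper. Creates LOG if it is missing and empties it
# in place once it grows past MAX_BYTES.
trim_log() {
    log=$1
    max_bytes=$2
    # Make sure the file exists before anything tries to tail it.
    [ -e "$log" ] || : > "$log"
    size=$(wc -c < "$log")
    if [ "$size" -gt "$max_bytes" ]; then
        # Truncate instead of removing, so the path stays valid.
        : > "$log"
    fi
}

# Example (not run here), with an arbitrary 1 MB limit:
#   trim_log /tmp/home_monitor 1048576
```

Because the monitor writes with tee -a, it appends at the current end of the file, so truncating while it is running should not leave a gap of empty bytes.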

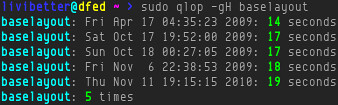

By the way, I had never thought there would be so much activity even in my own home directory.